There are two truths about our current state of data collection, synthesis and analysis: there is a lot of data and some of it is wrong.

Good data, whether it concerns customer service or any other issue, is key to managing projects, assessing program performance, delivering services efficiently and avoiding fraud. Across every industry, data generation and collection are accelerating, but having wrong data is worse than having no data.

Two months ago, I shared a tale of discovering bad data in TSA customer service numbers and how TSA and the Department of Transportation reacted to my discovery. Ultimately, TSA admitted error and corrected its data. Problem solved…in that instance.

But I was left wondering what other invalid information might be lurking in TSA's datasets, so I kept digging in the same TSA database. Here are the results, along with a lesson on why valid data matters for government agencies and customer service.

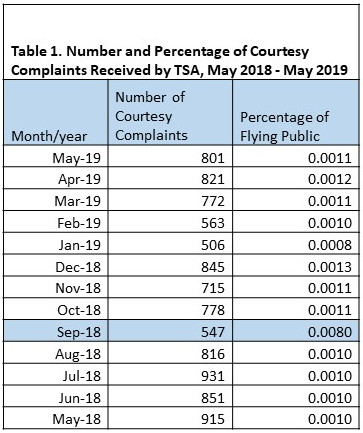

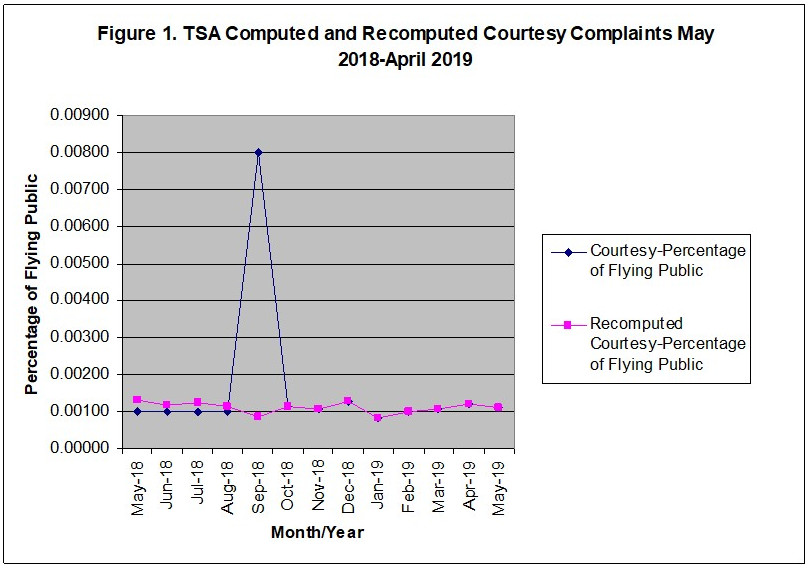

I reviewed courtesy complaint data that travelers submitted to TSA from May 2018 through May 2019 (Table 1). Plotted, as in Figure 1, the data suggest that something important happened in September 2018: the TSA complaint rate that month was obviously, abnormally high. Yet my research found no policy change that month visible enough to the public to explain it. What could have caused the spike?

When I recalculated the monthly complaint rates myself, I found that the actual rate for September 2018 was 0.0008, not the reported 0.008. A misplaced decimal point had inflated it tenfold.
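The recalculation itself is simple division, which is what makes a tenfold slip easy to catch once someone looks. The sketch below is a minimal illustration, not TSA's or DOT's method: it assumes the rate is complaints divided by passengers screened (the published table may scale it differently, for example as a percentage), and the function name `check_rates`, the 5% tolerance and the sample figures are all hypothetical.

```python
def check_rates(months, tolerance=0.05):
    """Return (month, reported, recomputed) for every month whose reported
    rate differs from complaints / passengers by more than `tolerance`
    (a relative difference).

    `months` maps a month label to (complaints, passengers, reported_rate).
    Assumption: the rate is a plain ratio; rescale first if the source
    reports it as a percentage or per 100,000 passengers.
    """
    flagged = []
    for month, (complaints, passengers, reported) in months.items():
        recomputed = complaints / passengers
        if abs(reported - recomputed) > tolerance * recomputed:
            flagged.append((month, reported, recomputed))
    return flagged


# Hypothetical figures, for illustration only.
sample = {
    "Month A": (300, 1_000_000, 0.0003),  # reported rate matches the recomputed one
    "Month B": (500, 1_000_000, 0.005),   # reported rate is ten times too high
}
for month, reported, recomputed in check_rates(sample):
    print(f"{month}: reported {reported}, recomputed {recomputed:.4f} "
          f"({reported / recomputed:.0f}x)")
```

With these made-up numbers only Month B is flagged, and its reported rate is exactly ten times the recomputed one, the signature of a misplaced decimal point.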

Digging further, I looked closely at the data published for November and December 2018, which reported exactly 66 million passengers and 53 million checked bags screened in each month. I had never before seen identical figures like these in consecutive months. For both issues, I needed TSA's input to verify (or, more likely, correct) its data.

On August 2, I contacted the TSA Contact Center, explained that I was working on an analysis that draws on TSA data and asked for help with these two issues. By August 5, I had received a timely response from Latricia Deese, Customer Service Branch, Office of Civil Rights & Liberties, Ombudsman, and Traveler Engagement. She confirmed that my concern about the percentages was correct, that the error was clerical and that a correction would be sent to the Department of Transportation. She also confirmed that 66 million passengers were screened in both November and December 2018, and explained that the baggage figure is the same for both months because it is calculated from passenger throughput: TSA estimates the number of bags screened by assuming that each passenger checks an average of 0.8 bags. By that method, the November and December bags-screened figures were accurate. That exactly the same number of passengers were screened in two consecutive months is, well, curious, to be sure.
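TSA's explanation can be checked with one line of arithmetic: 66 million passengers × 0.8 bags per passenger = 52.8 million bags, which rounds to the published 53 million. Because the bag figure is derived from the passenger figure rather than counted separately, identical passenger totals guarantee identical bag totals, so the repeated bag number adds no independent evidence either way. A minimal sketch, with a hypothetical helper name:

```python
# Average checked bags per passenger, as described in TSA's response.
BAGS_PER_PASSENGER = 0.8


def estimated_bags_millions(passengers_millions):
    """Bags screened (in millions), derived from passengers screened (in millions)."""
    return passengers_millions * BAGS_PER_PASSENGER


# Published figures from the November and December 2018 data: (passengers, bags), in millions.
published = {"Nov 2018": (66, 53), "Dec 2018": (66, 53)}

for month, (passengers, bags) in published.items():
    estimate = estimated_bags_millions(passengers)
    # The published bag figure should equal the estimate rounded to whole millions.
    print(f"{month}: {passengers}M passengers x 0.8 = {estimate:.1f}M bags "
          f"(published {bags}M, consistent: {round(estimate) == bags})")
```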

At a superficial level, putting a decimal point in the wrong place or mixing up data from month to month might not seem like a big deal. That is precisely the problem, because it is a big deal. My recent exchanges with TSA alone leave me wondering what other “clerical errors” are undermining the accuracy of TSA's data. How many could there be? I found several just by perusing a narrow set of customer service numbers. The implications are troubling.

Bad data can be embarrassing, and decisions made with bad information can sometimes have consequences worse than having no data at all. Services may be unnecessarily duplicated, programs may appear more or less successful than they actually are, dollars can be wasted, and homeland security may be jeopardized. TSA deserves kudos for correcting its data each time I've pointed out a problem, but the agency still has a way to go, if for no other reason than that more data problems are almost certainly lurking, as yet unidentified.

TSA has a lot of work ahead of it, but there are ways to improve. First, instead of publishing a single month of data each month, TSA should consider publishing three months of data every month, on a rolling basis. For example, instead of publishing data for June 2019 alone (which makes month-to-month comparison laborious), TSA should publish April and May data alongside it. This would allow both TSA and the consumers of its data to spot anomalies quickly, and when it comes to TSA, as I've found, anomalies often indicate bad data.
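To illustrate how a rolling three-month view surfaces anomalies, here is a minimal sketch built on an illustrative rule, not anything TSA uses: compare the newest month in each window with the window's median, and flag it if it is more than three times higher or lower, or if it exactly repeats the previous month. The median keeps one bad month from distorting the baseline for the months after it. The function names, the threshold and the figures are hypothetical.

```python
from statistics import median


def rolling_windows(series, size=3):
    """Yield each run of `size` consecutive (month, value) pairs, oldest first."""
    for end in range(size, len(series) + 1):
        yield series[end - size:end]


def flag_anomalies(series, ratio=3.0):
    """Check the newest month of every three-month window.

    A month is an "outlier" if it is more than `ratio` times above or below
    the window's median, and a "repeat" if it exactly equals the prior month.
    The ratio of 3.0 is an arbitrary, illustrative threshold.
    """
    flags = []
    for window in rolling_windows(series):
        *prior, (month, value) = window
        baseline = median([v for _, v in window])
        if value > ratio * baseline or value * ratio < baseline:
            flags.append((month, "outlier"))
        elif value == prior[-1][1]:
            flags.append((month, "repeats previous month"))
    return flags


# Hypothetical monthly complaint counts, for illustration only.
series = [("Jul", 310), ("Aug", 300), ("Sep", 3000),
          ("Oct", 305), ("Nov", 290), ("Dec", 290)]
print(flag_anomalies(series))
```

With these made-up figures the September spike is flagged as an outlier and December as a repeat of November, while October and November, which follow the spike, are not flagged.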

Second, TSA should add a new column of data for compliments received. A 2015 study of compliments states that focusing on customer compliments might be as important as focusing on complaints, because compliments data may help TSA managers motivate employees, retain the workforce and even increase productivity. It might also help data consumers like me, because it would allow analysis of the correlation between compliments and complaints. That is, it would become easier to identify whether certain factors (e.g., time of year, changes in policy) have an impact on homeland security, and in pursuing that analysis, bad data inevitably bubbles into view.
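If TSA published monthly compliments alongside complaints, a data consumer could, for example, compute the Pearson correlation between the two series. The sketch below is a minimal, dependency-free illustration; the function name `pearson` and the sample values are hypothetical, and a correlation by itself says nothing about cause.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length, at least 2 points each")
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    # Sum of co-deviations and the root sums of squared deviations.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (spread_x * spread_y)


# Hypothetical monthly counts, for illustration only.
compliments = [120, 135, 110, 150, 140]
complaints = [300, 290, 320, 280, 285]
print(f"r = {pearson(compliments, complaints):.2f}")
```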

Last, from a policy perspective, the airlines are required to provide some compliment and complaint data to the U.S. Department of Transportation (DOT). For July 2019, the DOT Air Travel Consumer Report is 55 pages long, and only one page of it is data provided by TSA. The balance between the data the airlines are required to make public and the data TSA makes public seems skewed, and the limited information TSA synthesizes and releases once again makes it difficult to see individual data points in context and probe their validity.

Ultimately, TSA is not alone in struggling with data veracity. As the public and private sectors aspire to collect and apply more data, errors will occur. We all make mistakes. Where organizations distinguish themselves is in how seriously they take their responsibility for disseminating accurate information. And when it comes to TSA, the implications of this responsibility could not be more serious, nor more important for our homeland security.